Agentic platform for industrial AI

Turning an idea into a market-winning product — 0 to 1 design of an industrial AI agent building platform.

Back in the summer of 2024, when AI agents were still mostly in the shadows, Cognite chose to bet early and launch an industrial AI agent offering.

Industrial use cases are complex and leave very little margin for error. They are steeped in domain context from organizational data and tribal knowledge, and their users have little tolerance for fancy tech that doesn't perform reliably. This new offering had to prove ROI on agentic systems beyond the hype, while carving out a clear, differentiated position in a rapidly evolving market.

I contributed directly to the agent architecture — separating agents and tools so AI complexity is abstracted into reusable tools, while agents stay focused on the domain task the industrial user defines. This made the platform navigable for SMEs without requiring AI expertise.
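To make the agent/tool separation concrete, here is a minimal sketch in Python. It is an illustration only, not the Atlas implementation: `Tool`, `Agent`, `lookup_timeseries`, and every name and string in it are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass(frozen=True)
class Tool:
    """A reusable capability. Any AI or data-access complexity lives behind `run`."""
    name: str
    description: str
    run: Callable[[str], str]


@dataclass
class Agent:
    """A domain task defined by an industrial user, composed from existing tools."""
    name: str
    task: str
    tools: Dict[str, Tool] = field(default_factory=dict)

    def add_tool(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def use(self, tool_name: str, query: str) -> str:
        if tool_name not in self.tools:
            raise KeyError(f"{self.name} has no tool named {tool_name!r}")
        return self.tools[tool_name].run(query)


def lookup_timeseries(query: str) -> str:
    # Hypothetical stub standing in for a real data lookup.
    return f"timeseries results for: {query}"


timeseries_tool = Tool("timeseries_lookup", "Find sensor readings", lookup_timeseries)

# Two agents with different domain tasks share the same tool instance.
maintenance = Agent("maintenance", "Plan maintenance for a pump")
troubleshooting = Agent("troubleshooting", "Investigate a pressure threshold breach")
for agent in (maintenance, troubleshooting):
    agent.add_tool(timeseries_tool)

print(maintenance.use("timeseries_lookup", "pump pressure, last 24h"))
```

In this sketch the tool is written once and reused, while each agent only carries a name, a task description, and the tools it needs, which is the part an SME would configure.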

I ensured the cross-functional team always had clarity on the core user journeys to support and the moments of value to deliver — preventing drift while technology and market shifted rapidly beneath us.

From UX to product positioning to sales — these design principles were the guardrails that drove every decision across product layers, giving us a streamlined focus across the company.

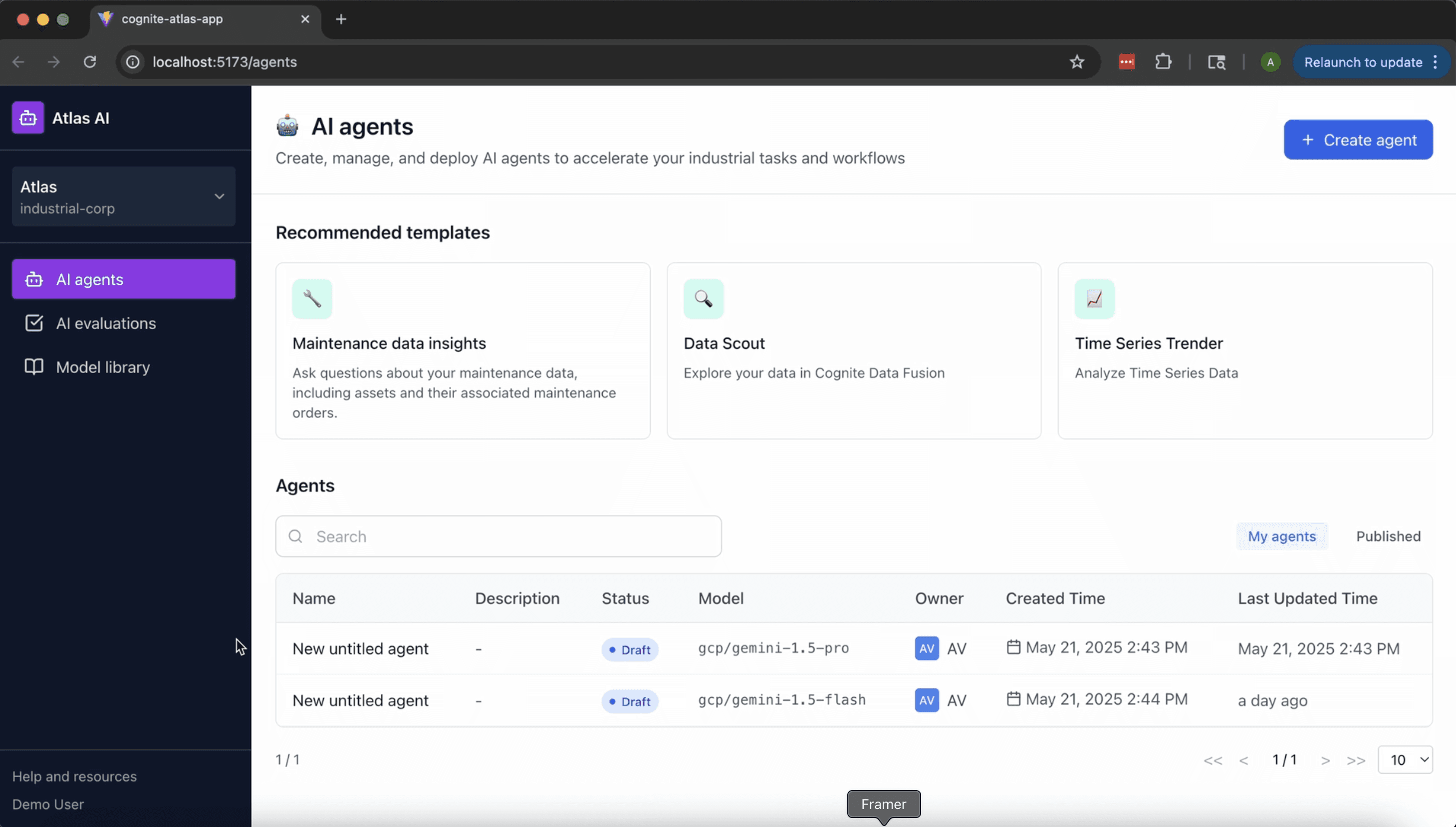

Atlas AI agents are built by groups of industrial SMEs for collective use — not individual agent building & consumption.

The platform is designed from the ground up for iteration. Production-grade AI agents are never built in one round; they are matured with rigor.

Building an AI agent requires different skill sets working together — data expertise, industrial context, and LLM knowledge.

Build, test, publish, access control, monitor, evaluate — all designed as first-class UX for enterprise readiness.

Agent Chat UX

Agent chat UX spans multiple design layers simultaneously. Industrial users move fluidly between exploration, analysis, and creation — and agents need to fit that rhythm, not interrupt it. That shapes decisions at every level: where invocation happens, how tasks are handed between agents, and how each response signals what it knows, what it used, and whether to trust it.

The prototype follows a single investigation: a pressure threshold breach, evidence surfaced inline per response, a second agent pulled in mid-thread without restarting the conversation.

01 — Response anatomy

How well did the agent understand the ask? Did it bring relevant information? Can the user trust it enough to act — and course-correct if not? Inline entity tokens, tabbed evidence cards by data type, and a persistent "Reasoning" link each answer a different part of that question, without the user needing to leave the response to find out.

02 — Multi-agent conversation

Industrial workflows don't map to a single agent. An investigation starts with one agent and may need to pull in two more — without the user losing the thread they've built. Agents are invoked at the point of need, mid-conversation, not selected upfront. And beyond invocation, users need to know what context the agent already has, and what actions are available to them — without having to guess.

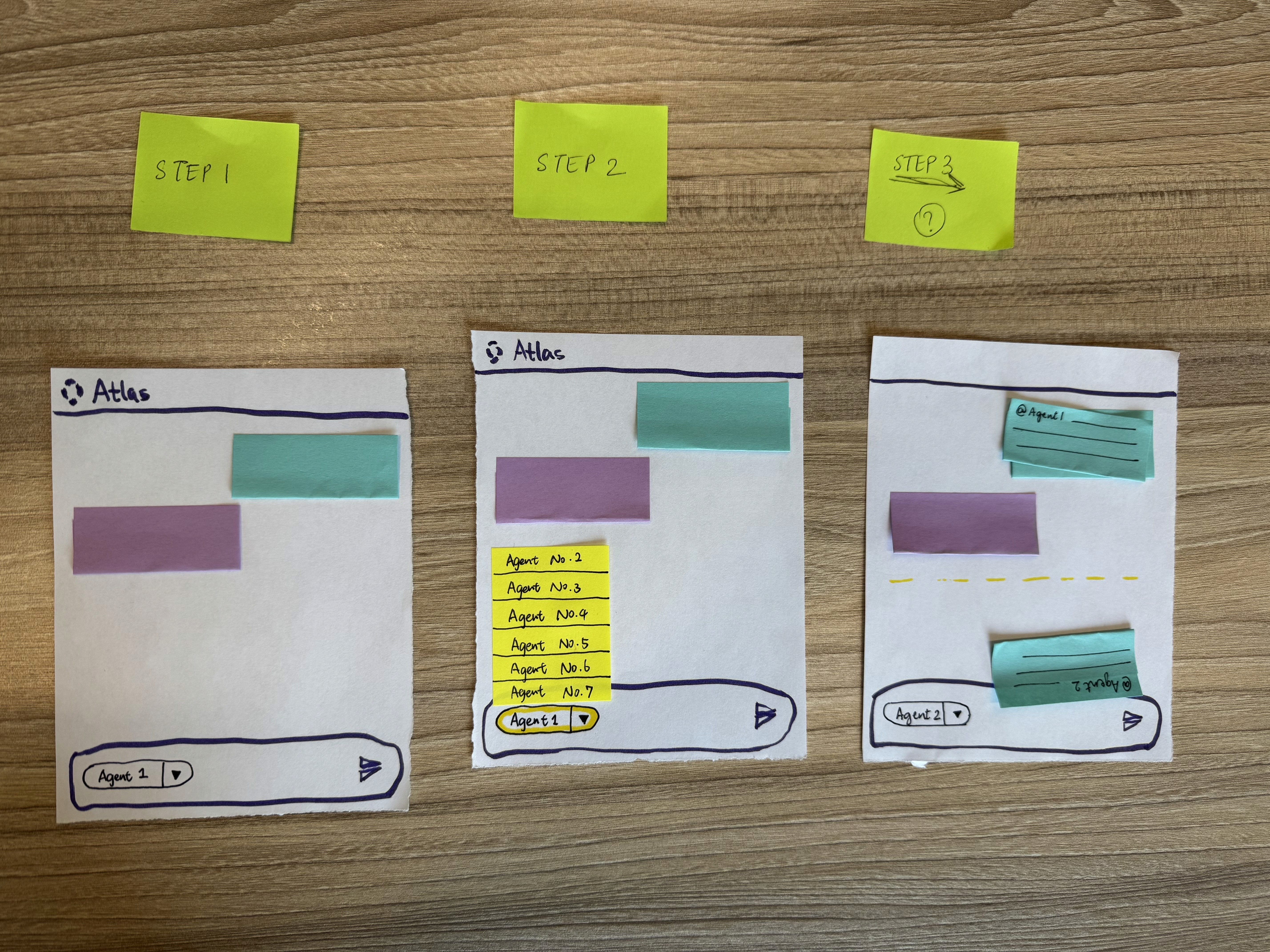

The familiar pattern — selecting a model at the top of the chat window before the conversation starts — was designed for single-assistant switching, where the "who am I talking to?" question is settled before anything is said. In Atlas, an engineer investigating an incident needs to pull in a troubleshooting agent partway through a conversation, without abandoning the thread they've already built. The pre-conversation selector pattern would either force a session restart or create a confusing "replace vs. add" ambiguity each time.

Inline invocation — calling a named agent at the point in the conversation where its capability becomes relevant — keeps the thread coherent and makes agent selection a contextual act rather than a session setup choice. We ran a direct comparison test rather than defaulting to the familiar pattern. The test gave us data to defend the unfamiliar choice to engineering stakeholders who had strong prior expectations, and it surfaced a specific confusion point (users unsure whether invocation replaced or added to the current agent) that shaped the final interaction design.
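The "add, not replace" behaviour behind inline invocation can be sketched as a small data model. This is an assumption-laden illustration in Python, not how Atlas stores conversations: `Thread`, `invoke`, the agent names, and the sample messages are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Message = Tuple[str, str]  # (speaker, text)


@dataclass
class Thread:
    messages: List[Message] = field(default_factory=list)
    agents: List[str] = field(default_factory=list)

    def say(self, speaker: str, text: str) -> None:
        self.messages.append((speaker, text))

    def invoke(self, agent: str) -> List[Message]:
        """Add an agent to the thread and return the context it starts with."""
        if agent not in self.agents:
            self.agents.append(agent)  # add to the thread, never replace
        return list(self.messages)     # a copy of the full thread so far


thread = Thread()
thread.invoke("analysis-agent")
# Made-up sample messages, for illustration only.
thread.say("user", "Why did pressure breach the threshold?")
thread.say("analysis-agent", "Here are the readings around the breach.")

# A second agent is pulled in mid-thread and inherits the existing context.
context = thread.invoke("troubleshooting-agent")
print(thread.agents, len(context))
```

Modelling invocation as an append makes the answer to "replace vs. add" explicit in the data, which is the confusion point the comparison test surfaced.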

03 — Interaction modes

A side pane conversation is right for synchronous, exploratory work, but not all agent interaction is synchronous. Some tasks require invoking an agent contextually from the work surface itself. Others are long-running: deep research that needs alignment on a plan before kicking off, then runs in the background while the user continues other work and surfaces completions and decisions asynchronously.

Low-code Agent Builder

Agent building designed for industrial SMEs — not AI engineers. The design challenge: domain experts understand the task but not the AI. The builder had to make agent configuration feel like task configuration — approachable, testable, and enterprise-ready from the start.

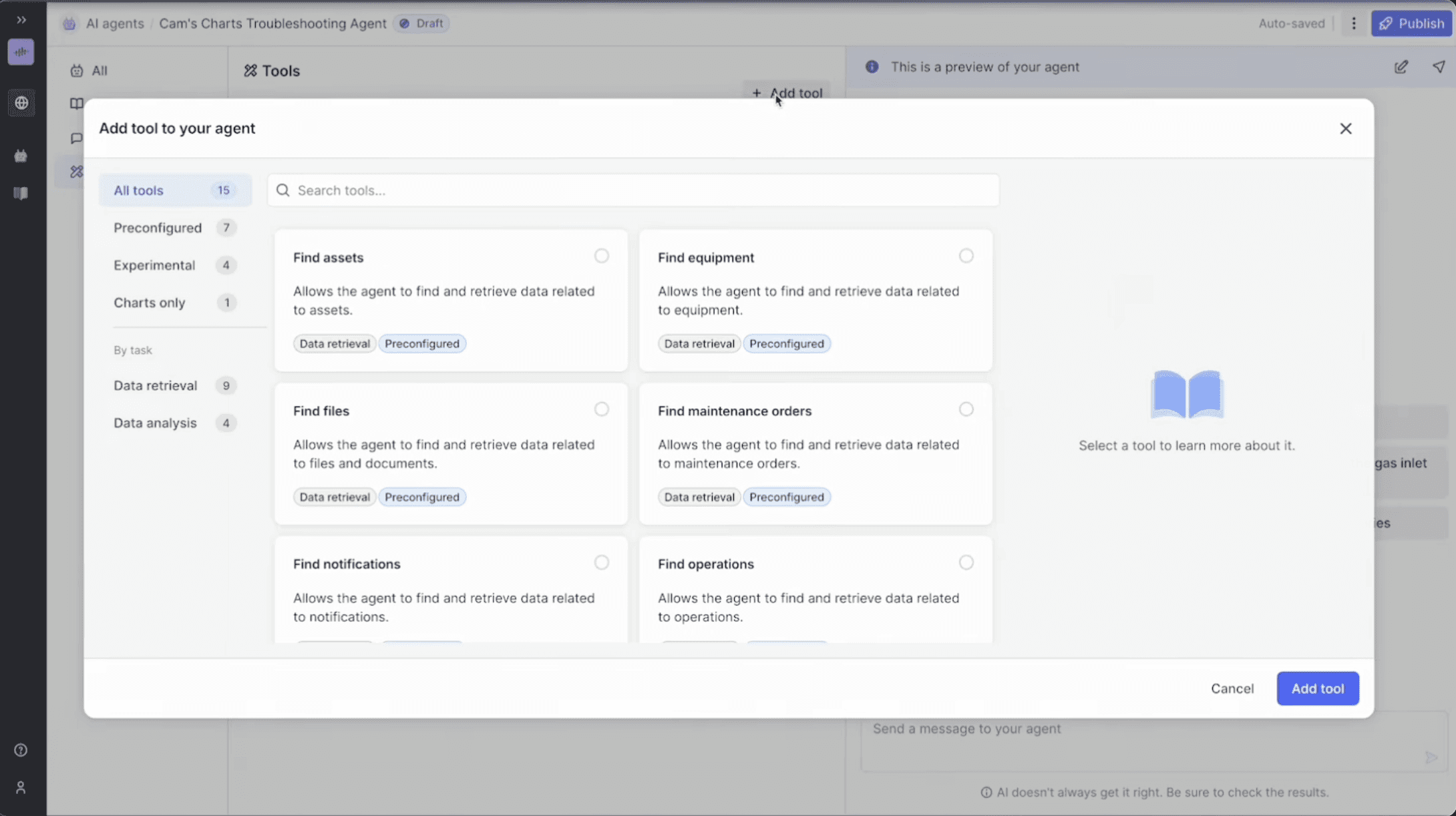

The prototype shows an SME building a maintenance agent from scratch — browsing tools by task type, configuring data scope inline, testing in the persistent preview — without writing a single prompt. Three design decisions underpin this.

01 — Approachability

Industrial SMEs think in capabilities, not AI primitives. Organising the builder around named tools — rather than prompt boxes — means a maintenance engineer configures around the task they know, not the technology they don't. Templates based on real industrial use cases give them a starting point rather than a blank slate.

02 — Build–test–revise loop

The preview panel is always visible alongside the config — not a separate step you reach after finishing. LLM selection surfaces benchmarks filtered to the task type, not global scores an SME can't act on.

03 — Enterprise readiness

An agent that works isn't enough — it needs to be scoped and trusted at an organisational level. Access control is designed around both role and data scope.
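One way to read "both role and data scope" is as two independent checks that must both pass. The sketch below is a hypothetical Python illustration; `User`, `AgentPolicy`, `can_use`, and the role and scope names are assumptions, not the platform's actual access model.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class User:
    name: str
    roles: FrozenSet[str]
    data_scopes: FrozenSet[str]


@dataclass(frozen=True)
class AgentPolicy:
    allowed_roles: FrozenSet[str]    # who may use the agent
    required_scopes: FrozenSet[str]  # data the agent reads on the user's behalf


def can_use(user: User, policy: AgentPolicy) -> bool:
    """Allow access only if the user holds an allowed role AND every required data scope."""
    has_role = bool(user.roles & policy.allowed_roles)
    has_scope = policy.required_scopes <= user.data_scopes
    return has_role and has_scope


policy = AgentPolicy(
    allowed_roles=frozenset({"maintenance-engineer"}),
    required_scopes=frozenset({"site-a/pumps"}),
)
engineer = User("engineer", frozenset({"maintenance-engineer"}), frozenset({"site-a/pumps"}))
contractor = User("contractor", frozenset({"maintenance-engineer"}), frozenset())

print(can_use(engineer, policy), can_use(contractor, policy))
```

The contractor has the right role but not the data scope, so the check fails for them even though a role-only model would have let them through.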

Agent Evaluations

A structured way to know — not feel — that the agent is ready. Define test cases with expected answers, run them against a specific agent version, get pass/fail per case. Publishing becomes evidence-based.

Built as working software rather than a Figma mock — interdependent state across configuration, runs, and drill-downs couldn't be adequately simulated with static interactions.

02 — Reusable evaluations

Keeping evaluations separate from agent building enables separation of duties — the person curating test sets doesn't have to be the agent builder. Eval sets are reusable across agent versions and maintainable independently.
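The evaluation flow in this section (test cases with expected answers, run against a specific agent version, pass/fail per case, one eval set reused across versions) can be sketched as follows. This is a minimal Python illustration under stated assumptions: agents are stubbed as plain functions, matching is a case-insensitive exact comparison, and `EvalCase`, `run_eval`, `ready_to_publish`, and the sample questions are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class EvalCase:
    question: str
    expected: str


def run_eval(agent: Callable[[str], str], cases: List[EvalCase]) -> Dict[str, bool]:
    """Return pass/fail per case. Exact match (trimmed, lowercased) is an assumption."""
    return {
        case.question: agent(case.question).strip().lower() == case.expected.strip().lower()
        for case in cases
    }


def ready_to_publish(results: Dict[str, bool]) -> bool:
    """Treat a version as publishable only when every case passes."""
    return all(results.values())


# The eval set is defined independently of any agent, so it can be curated separately.
eval_set = [
    EvalCase("Which pump tripped?", "P-101"),
    EvalCase("What was the alarm type?", "high pressure"),
]


# Stubbed agent versions with made-up answers, for illustration only.
def agent_v1(question: str) -> str:
    return {"Which pump tripped?": "P-101"}.get(question, "unknown")


def agent_v2(question: str) -> str:
    answers = {"Which pump tripped?": "P-101", "What was the alarm type?": "High pressure"}
    return answers.get(question, "unknown")


results_v1 = run_eval(agent_v1, eval_set)
results_v2 = run_eval(agent_v2, eval_set)
print(ready_to_publish(results_v1), ready_to_publish(results_v2))
```

Because `eval_set` never references an agent, the same cases run unchanged against each new version, and the person maintaining them does not need to touch the agent configuration.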

Atlas AI launched as a genuinely differentiated product in the industrial AI agent market, proving ROI on agentic systems before the market became crowded and achieving commercial success beyond projections.

Early metrics from actual users showed clear value: a ~90% reduction in user time on task for complex industrial work like root cause analysis (6 hours instead of 6 weeks). Usability testing showed that first-time users without prior AI expertise were able to build a working agent in less than an hour.

The success of Atlas didn't stop at the product itself — it laid the foundation for a completely new AI-native strategy across the entire portfolio of products at Cognite.

The 0→1 nature of this project meant that design had outsized influence on what the product became — not just how it looked. Leading with UX strategy before any UI meant the team was solving the right problem. The foundational principles around collaboration, curated outcomes, and build-test-revise weren't just design guidelines; they became engineering priorities.

The team itself was forming as we were building — new engineers, PMs, designers, and stakeholders joining at pace, each with their own context and assumptions. Being a consistent voice and anchor through that flux was as much a part of the leadership role as any design artefact. When the team or the market shifted around us, a clear point of view on what we were building and why kept execution from fragmenting.

Vibe coding as a design tool was a genuine accelerant — being able to show a "live" evaluations flow rather than a static prototype changed the quality and speed of PM/engineering alignment.