Watson Discovery: Democratizing AI for business users

Delivering the MVP of a strategic pivot for an established enterprise AI product — evolving it from a developer tool to a low/no-code platform for citizen builders.

Watson Discovery v1 was one of IBM's flagship enterprise AI products — the first GUI to let companies build AI search and analytics on unstructured data. But it was built for AI developers who understood data pipelines, model retraining, and natural language processing.

The v2 mission: reach across the enterprise to business teams who needed AI without requiring coding or AI expertise. The product had to evolve its user base, its mental model, and its entire experience — without losing what made it powerful.

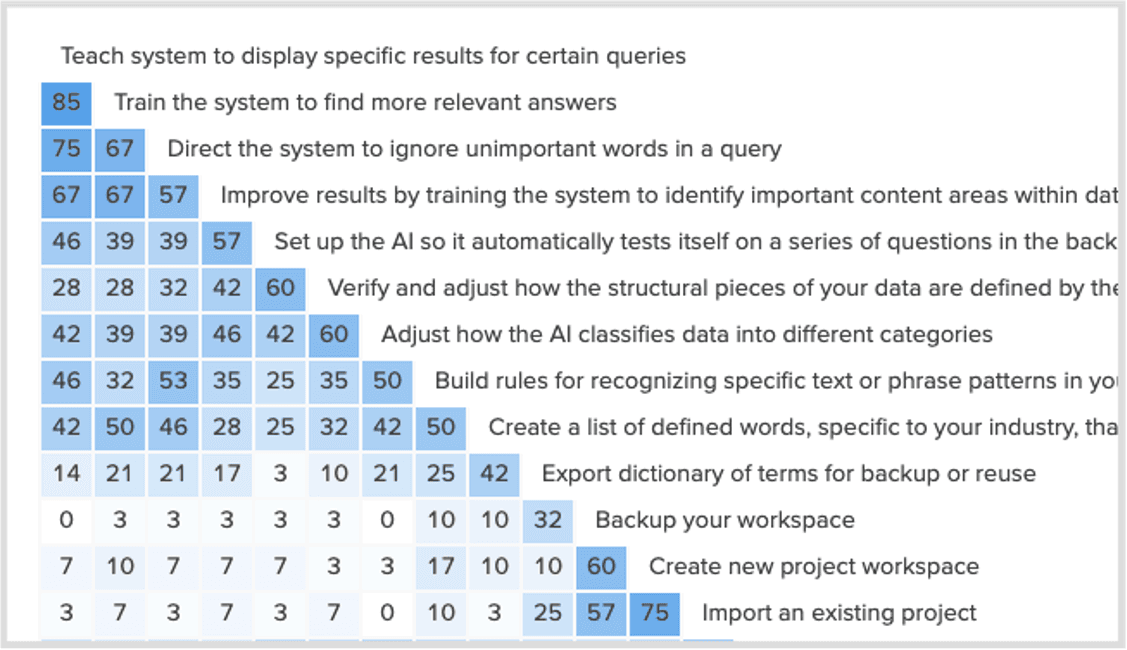

I led PM, Engineering, and Research across all 4 product areas to collaboratively build a "to-be" journey map for the citizen builder workflow. Every squad could see how their piece fit into the full picture — spotting constraints early and orienting delivery around a shared goal instead of individual feature backlogs.

I paired closely with PMs to translate the journey map into IBM hills & epics, then defined 2 hero moments as concrete innovation targets — giving every squad clarity on what "great" looked like and preventing delivery from fragmenting into isolated features across 150 engineers.

I defined an aspirational AI experience vision — grounded in business opportunity, the user landscape, and Watson's technical capabilities — that kept long-term coherence intact across releases, so short-term delivery decisions never lost sight of the end-state.

With 150 engineers across 4 product areas, there were countless ways to innovate. The two hero moments gave every squad a concrete innovation target, which kept the experience coherent and stopped delivery from fragmenting into isolated features.

A guided approach to iteratively improve AI training accuracy — making the feedback loop visible and actionable for non-experts.

An easy way to get started with minimal effort to reach the first moment of value — dramatically lowering the barrier to entry for citizen builders.

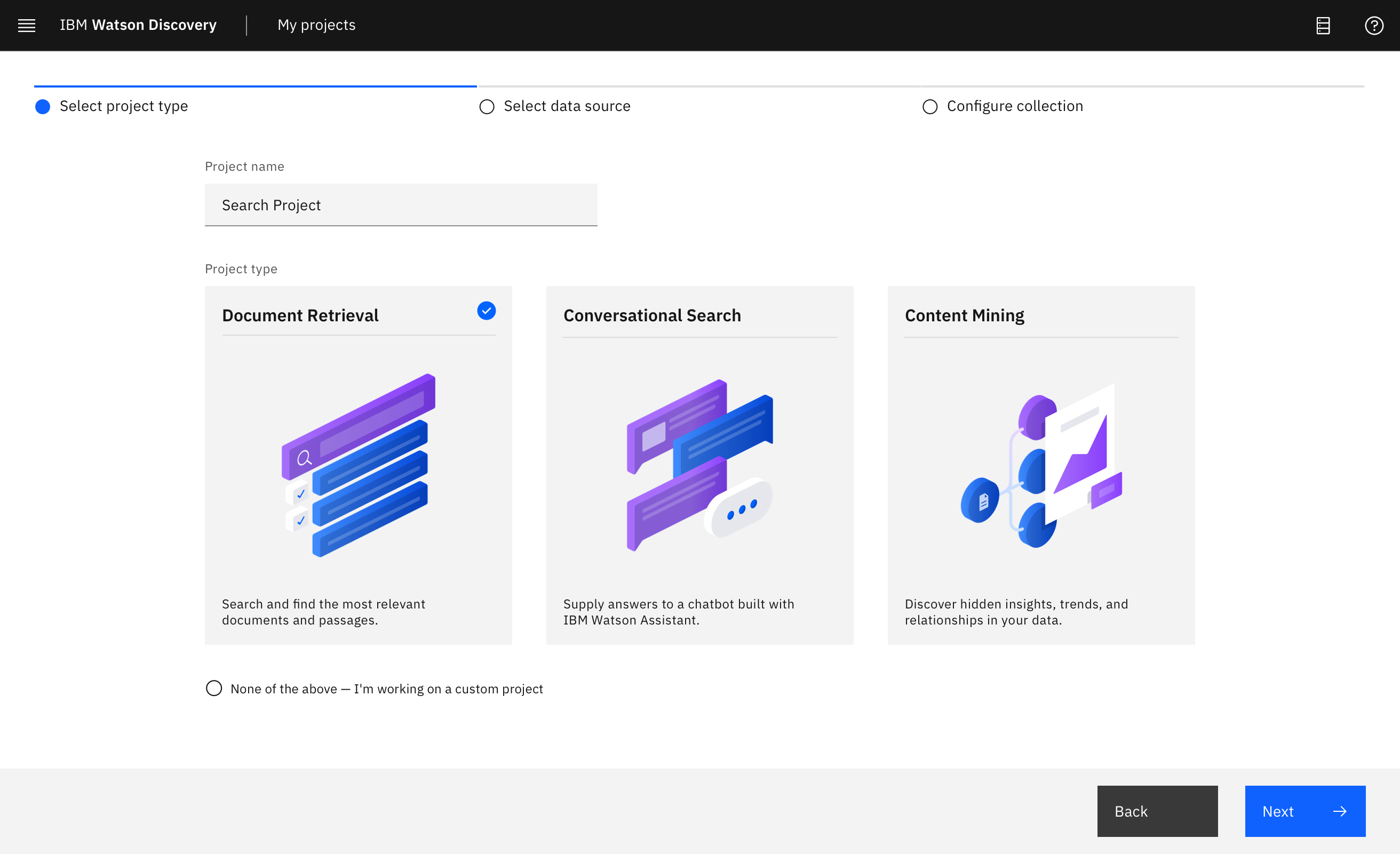

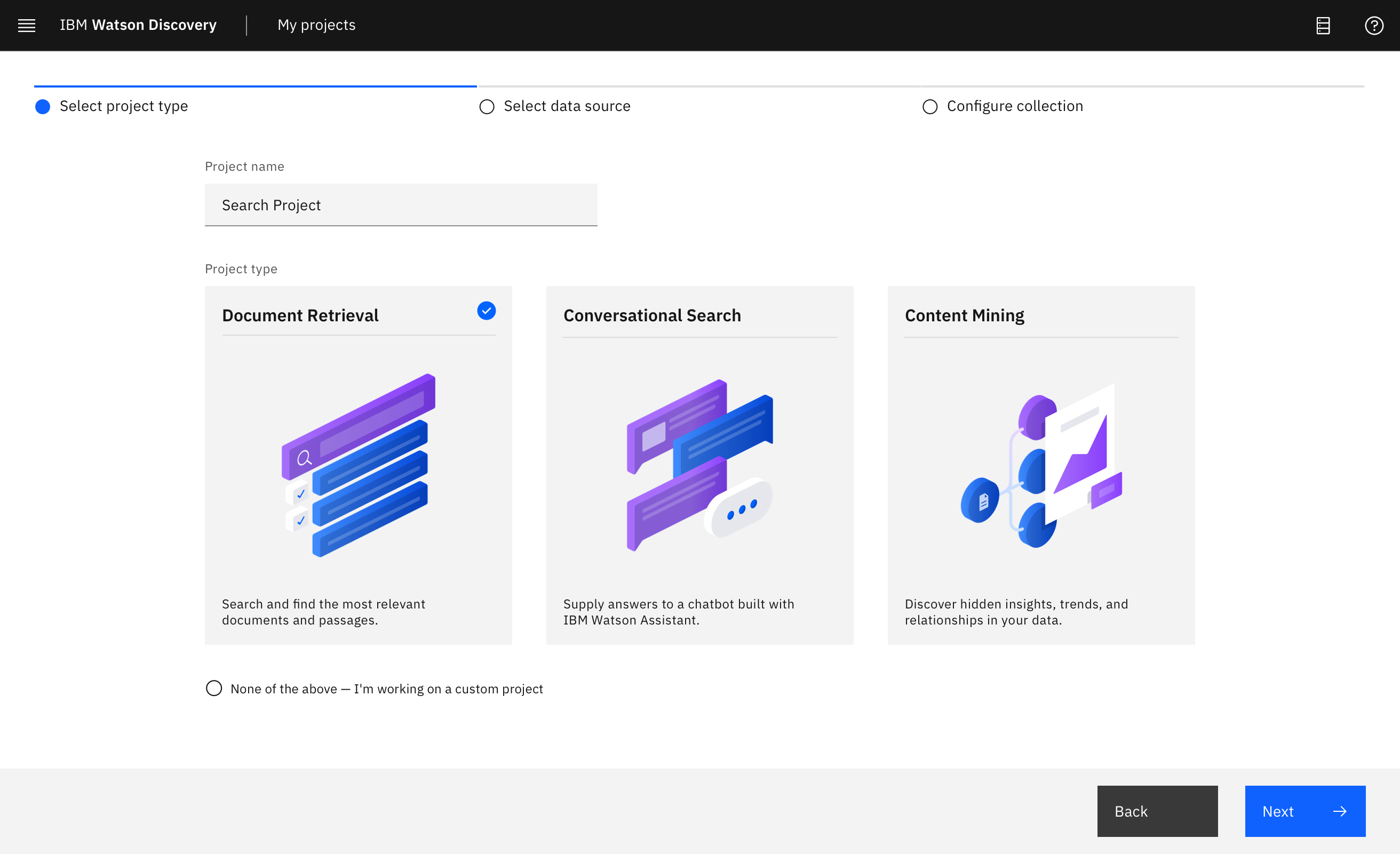

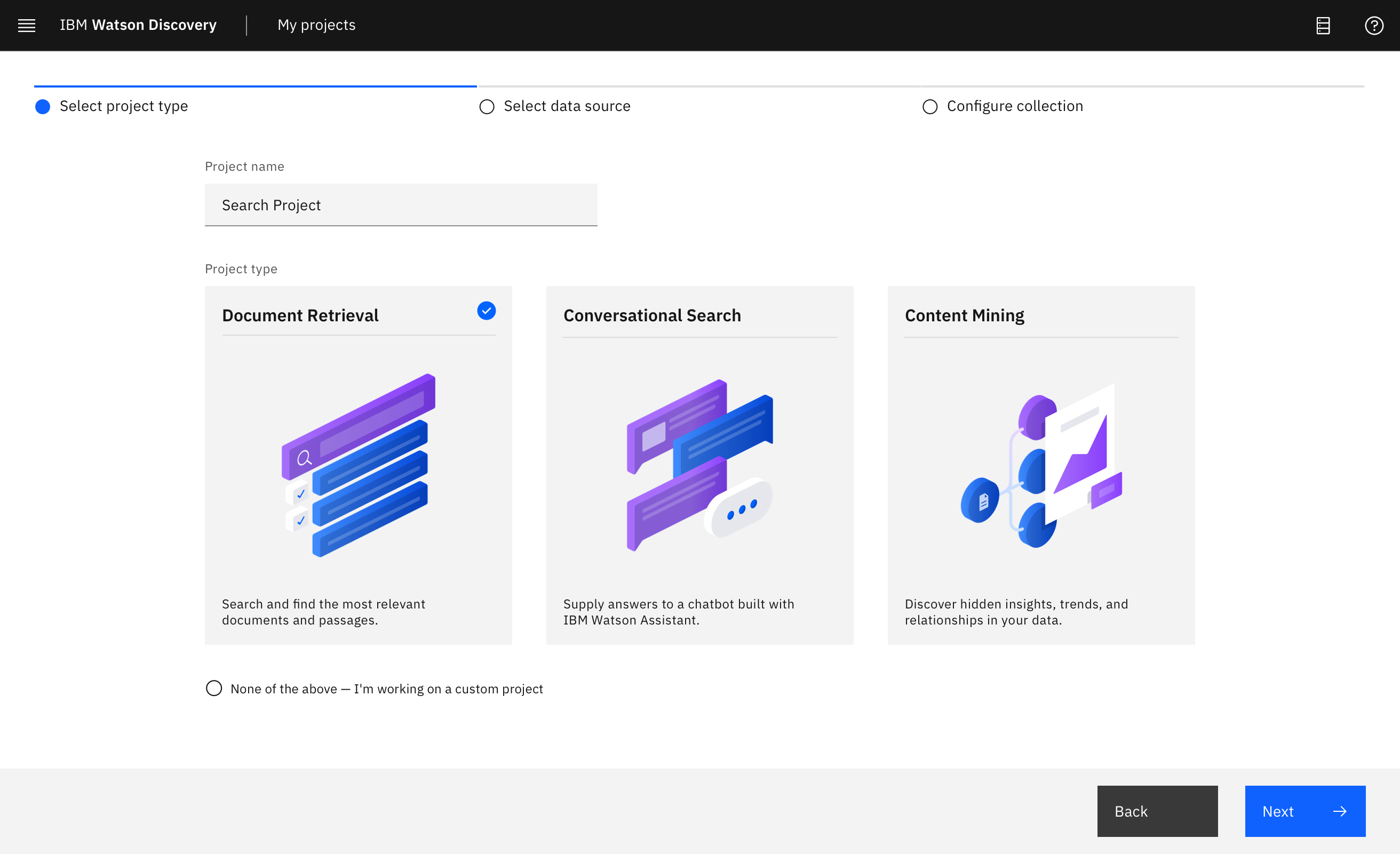

Projects to guide workflows

Projects turned a developer configuration tool into an outcome-driven experience. Choosing a project type helped users think about the end goal they were trying to achieve — and the product met them there, with recommendations and previews, instead of a wall of settings.

Success of the Projects concept depended on how well we mapped projects and outcomes to various configuration flows in Watson Discovery. I collaborated closely with engineering to map this through system flowcharts, acceptance criteria, API reviews, and test cases.

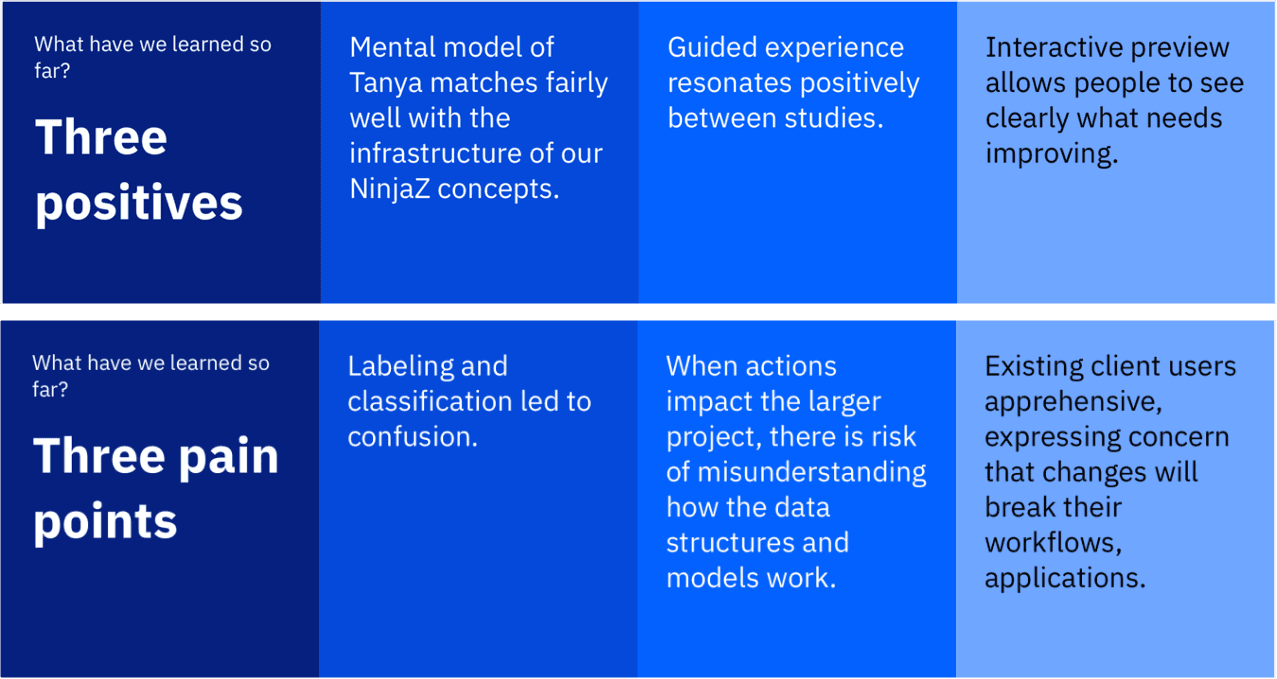

Given how foundational this concept was to the redesign, the design team ran multiple rounds of validation with users. The concept resonated — but users' mental model of the configuration steps was unclear. We iterated heavily on terminology until the UI copy was unambiguous.

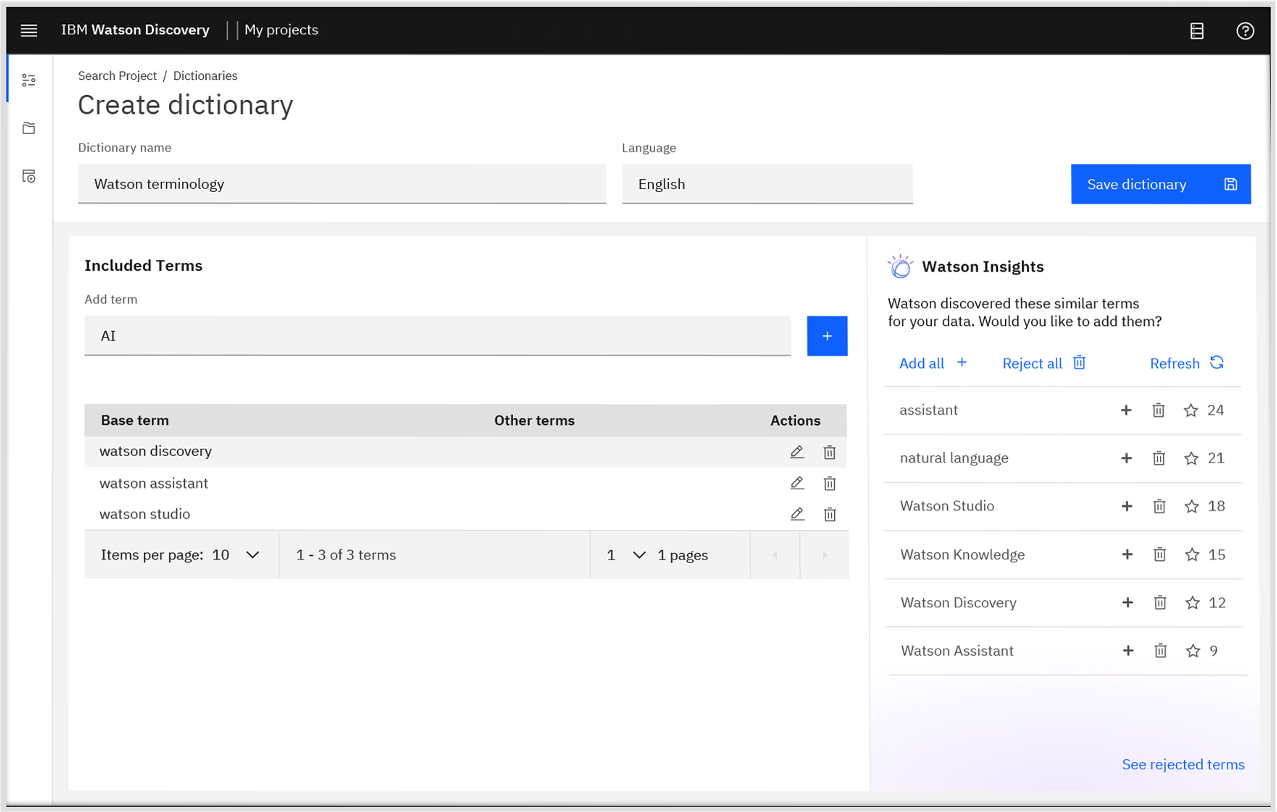

Domain vocabulary training

Few-shot NLP training, productized as a builder-friendly workflow. The core challenge: this was a powerful research capability, but it was only valuable if users understood what it did and why it mattered, and could act on it without AI expertise.

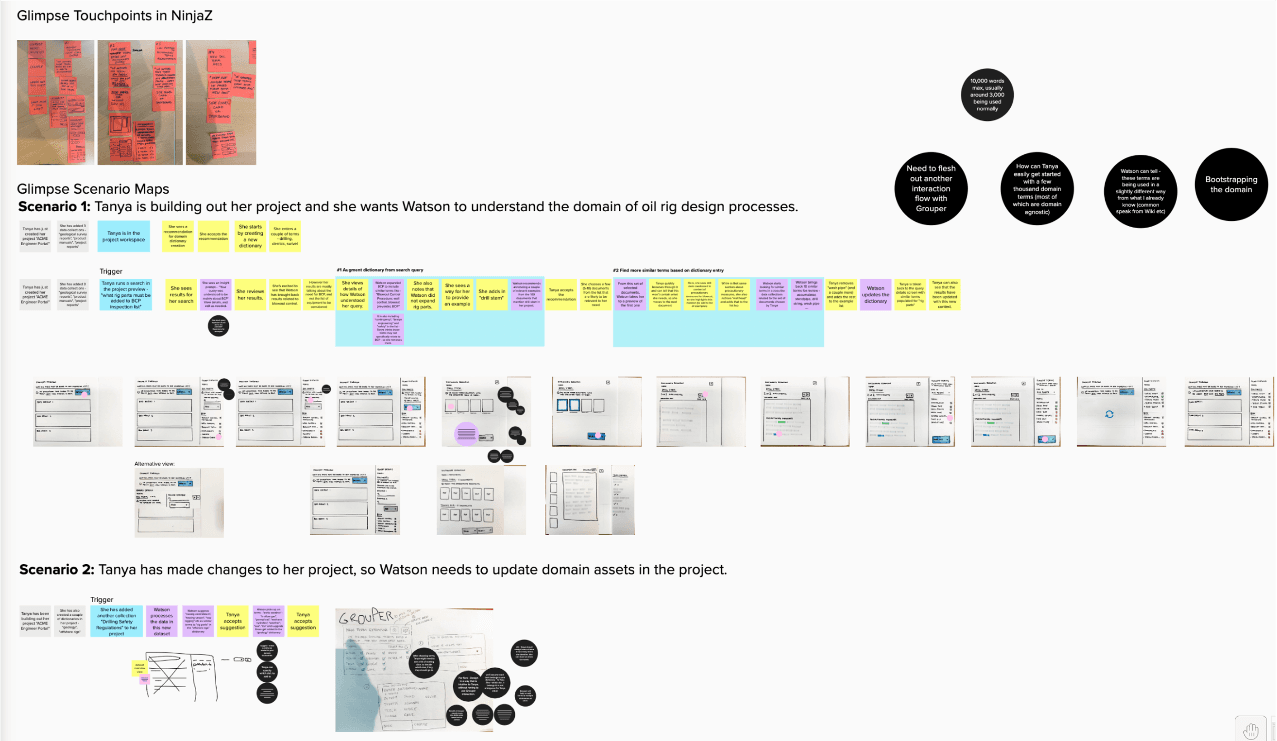

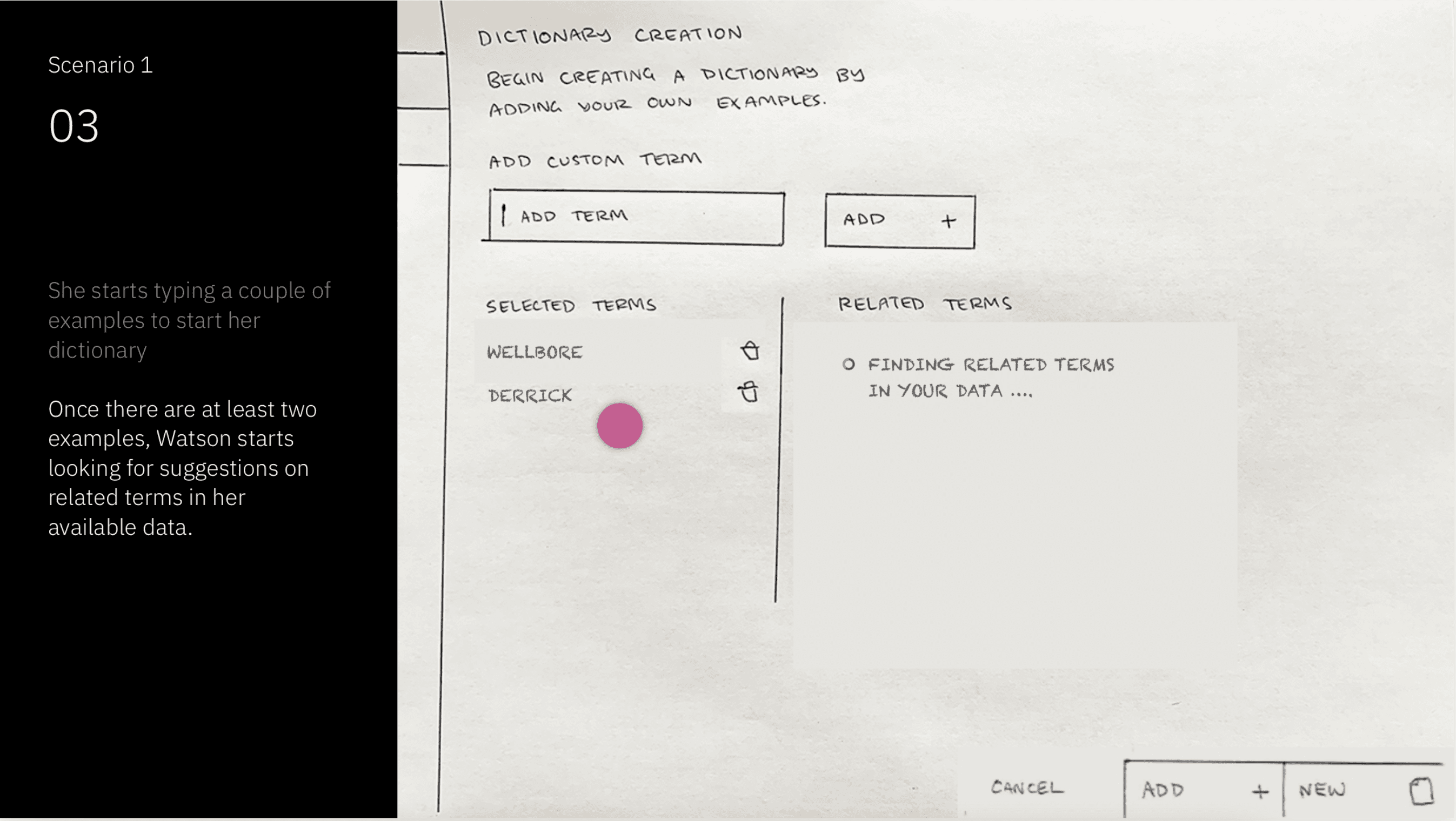

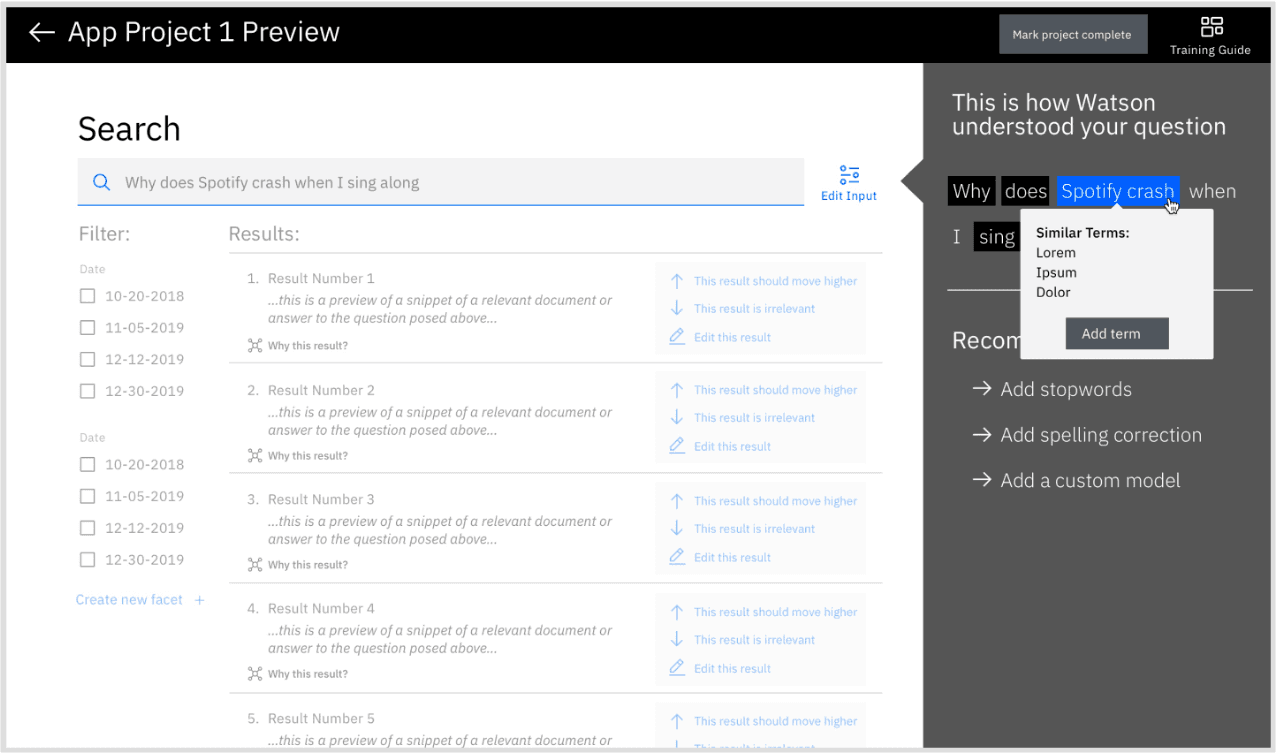

I explored various ways to productize this asset from the Research organization. While the technical innovation was strong, fitting it into a user's workflow — so it would provide measurable value — was the critical design challenge.

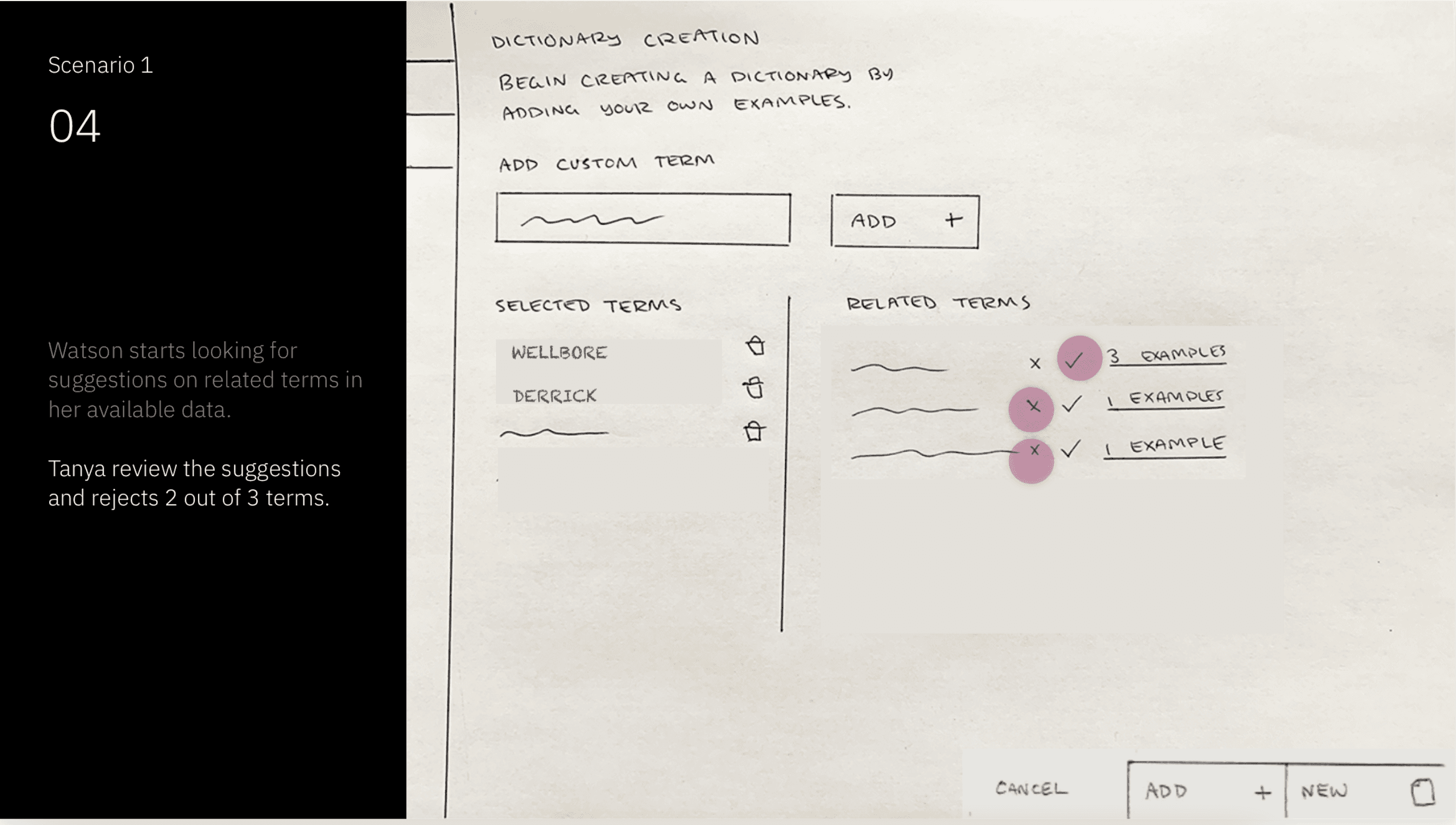

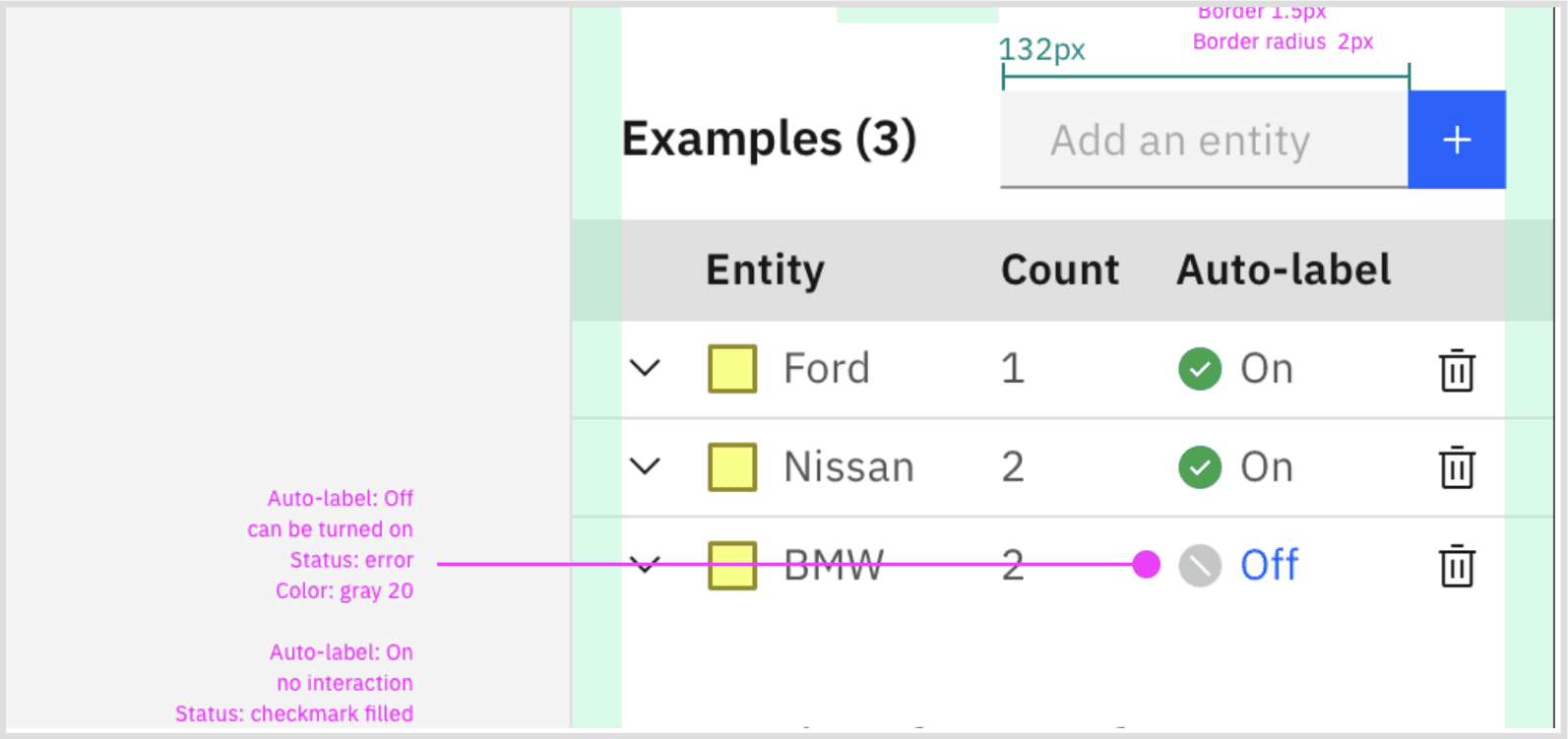

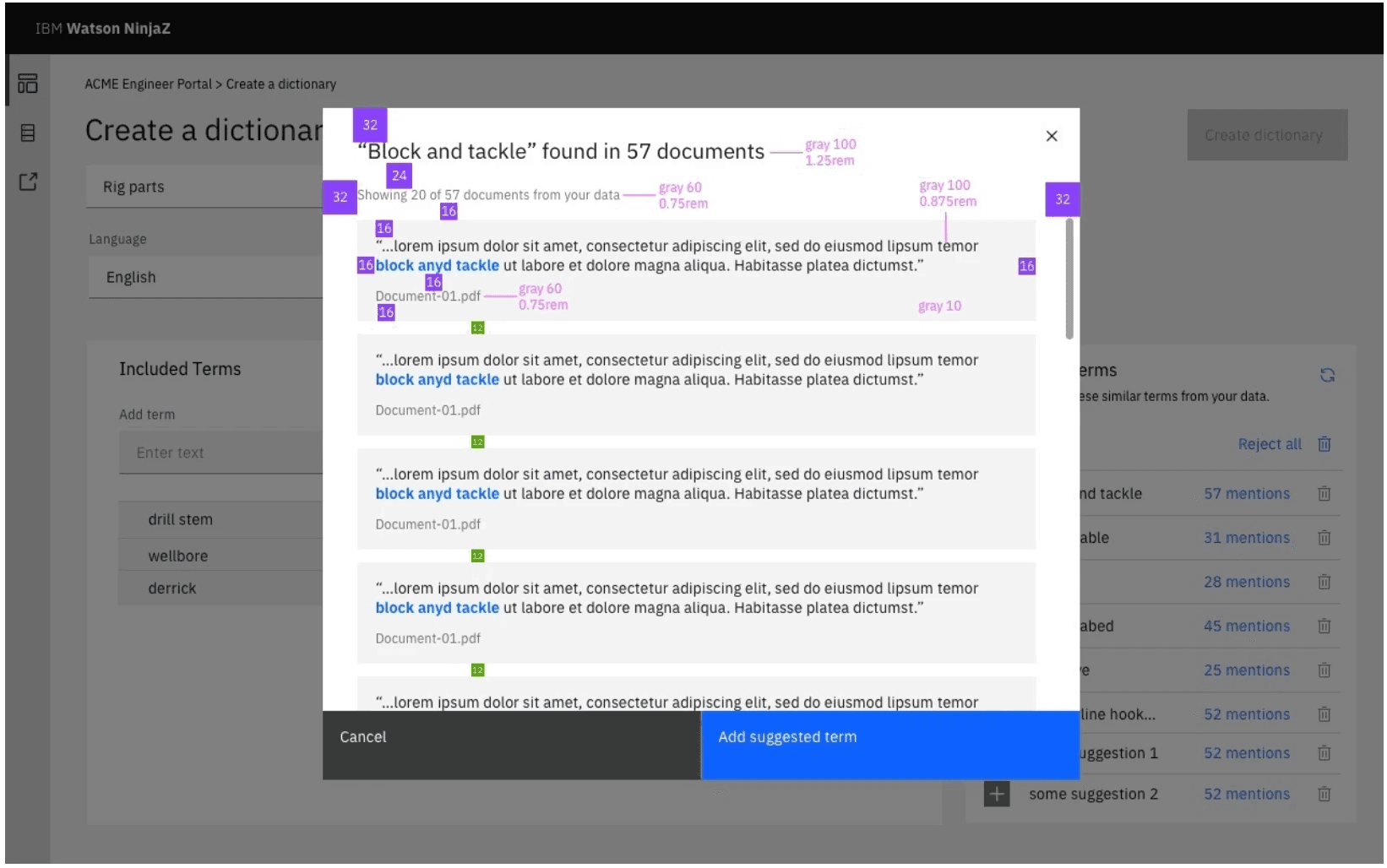

I collaborated with the Research team to explore contextual ways to seed the NLP model through natural touch points across a user's workflow. We wanted to avoid burdening the user with an extra task as much as possible. Making it easy to accept, reject, and revise terms was important both for the UX and for technical reasons, and it was critical for building confidence.

The 5-term threshold was a technical constraint we designed around. Making this invisible to the user while making the experience feel effortless was the core UX challenge.

These interaction design explorations look at how the capability could be extended further — moving beyond manual term entry by auto-identifying domain-specific terms directly from documents. This would reduce human effort substantially while preserving user control: surfacing suggested terms in context so builders can review, accept, or dismiss with confidence rather than starting from scratch.

Key open questions the explorations had to address:

Watson Discovery v2 shipped as a genuinely transformed product — not just a UI refresh, but a fundamental rethinking of who enterprise AI tools are built for.

The pivot to citizen builders was validated in the market: business teams could now build AI apps without developer expertise, and the product reached a new user group that v1 had never served. The product was recognized externally as an example of design-led innovation at enterprise scale (FastCo 2020 Innovation By Design Award).

The work aligned 150 engineers, 13 PMs, and 9 designers across 4 product areas in the US and Japan around a single coherent experience — delivered in 9 months.

The scale of this project meant that alignment artifacts carried as much weight as the UI itself. The to-be journey map and the hills framework weren't just strategy tools — they were the shared language the team used to make decisions for nine months.

Leading with user research to identify the new user group before touching any UI meant we avoided the most common failure mode for enterprise product pivots: building for imaginary users. The usability testing on terminology was a reminder that even well-conceived concepts can fail on language alone.