CDF v3: AI-native industrial platform

Reimagining the complete Cognite product family for a world where AI is native to how industrial workers think and act.

Cognite Data Fusion (CDF) is the industrial data and AI platform that powers the full suite of Cognite products. It enables industrial workers to find and work with any kind of information — across engineering, operational, and informational data.

After successfully building and maturing Atlas — a full-fledged industrial AI agent platform on top of CDF — the question shifted from "how do we add AI" to "what if AI was intrinsic to the entire platform experience?" Not AI features bolted onto existing workflows, but a fundamental rethink of what industrial work looks and feels like when AI is a native part of how users reason and act.

The design organisation took up this challenge. In six weeks, we created a revamped vision for the future of CDF — completely rethinking all industrial user experiences. We also rethought our own design workflows, embedding AI tools like Cursor and v0 as a first-class part of how we explored ideas.

The team spanned multiple product lines, design skills, and experience levels. I planned the sprint structure to open with a deliberate research soak — synthesizing existing user insights and running lean generative research with real customers. This revealed unmet needs in industrial contexts alongside honest accounts of what was and wasn't working with AI in practice. The shared foundation meant the team could diverge with genuine purpose, not just intuition.

I distilled the research findings into a focused set of usage scenarios to prioritise, then led the team in mapping end-to-end user workflows across them. Having already built Atlas, I understood both the possibilities and limitations of the underlying tech — generally and within Cognite's stack. This let me identify the right areas of innovation: where technology could genuinely meet user needs better, and where it couldn't yet be trusted to.

I led the full research effort across multiple validation phases, culminating in ~150 on-site sessions at Impact 2025. The vision was tested with real industrial users — field workers, engineers, and maintenance experts — not just internal stakeholders. The research strongly validated the strategic direction while surfacing nuanced context that continued to shape specific focus areas as the work evolved.

We started by synthesizing accumulated user research on industrial workflows — not to validate existing features, but to identify where AI could genuinely elevate the work. A key focus was mapping how tools, processes, and people connect within core industrial scenarios — and where those connections break down, create friction, or lose context. The underlying hypothesis: that AI, applied foundationally across the platform rather than as a feature layer, could be what finally ties these together into effective, coherent industrial work.

From research synthesis, we moved quickly to low fidelity — sketching tentative solution flows to identify the most promising directions before investing in anything more detailed. The goal was enough shared understanding across the team to diverge meaningfully.

From low-fi, the team diverged and built vibe-coded explorations: some focused on end-to-end flows, others on the nuances of specific AI micro-interactions. Critically, vibe coding let us prototype a near-functional system with real LLMs under the hood, which was essential for faithfully replicating the inherent variability of AI. We weren't designing for a controlled Figma prototype where every response is predetermined, but for a live, unpredictable system. That surfaced genuinely different UX problems and helped us work honestly with both the strengths and constraints of AI-native experiences.

Having a live, LLM-powered prototype also meant that both internal stakeholders and real users could interact with the system directly and give feedback on the actual AI UX — not on a simulation of it. This shaped both our design decisions and our research approach in ways a Figma prototype never could have.

I put the prototype directly in front of real industrial users — field workers, engineers, maintenance experts, and digital transformation champions — people who would actually use something like this on the job.

Participants used the live prototype freely, letting us observe how they naturally approached tasks: did they lean toward search or automation? What expectations did the system miss? A Figma simulation couldn't have surfaced the same signal.

The approach was mixed-methods across three phases: moderated sessions for depth, unmoderated self-service for scale, and on-site at Impact for breadth. Key research questions: Can users trust AI for business-critical work? What do they expect from a natural language interface? Does this have the pull to become a daily-use tool?

The results strongly validated the strategic direction — and the nuanced context that emerged kept shaping focus areas and prioritisation well beyond the sprint.

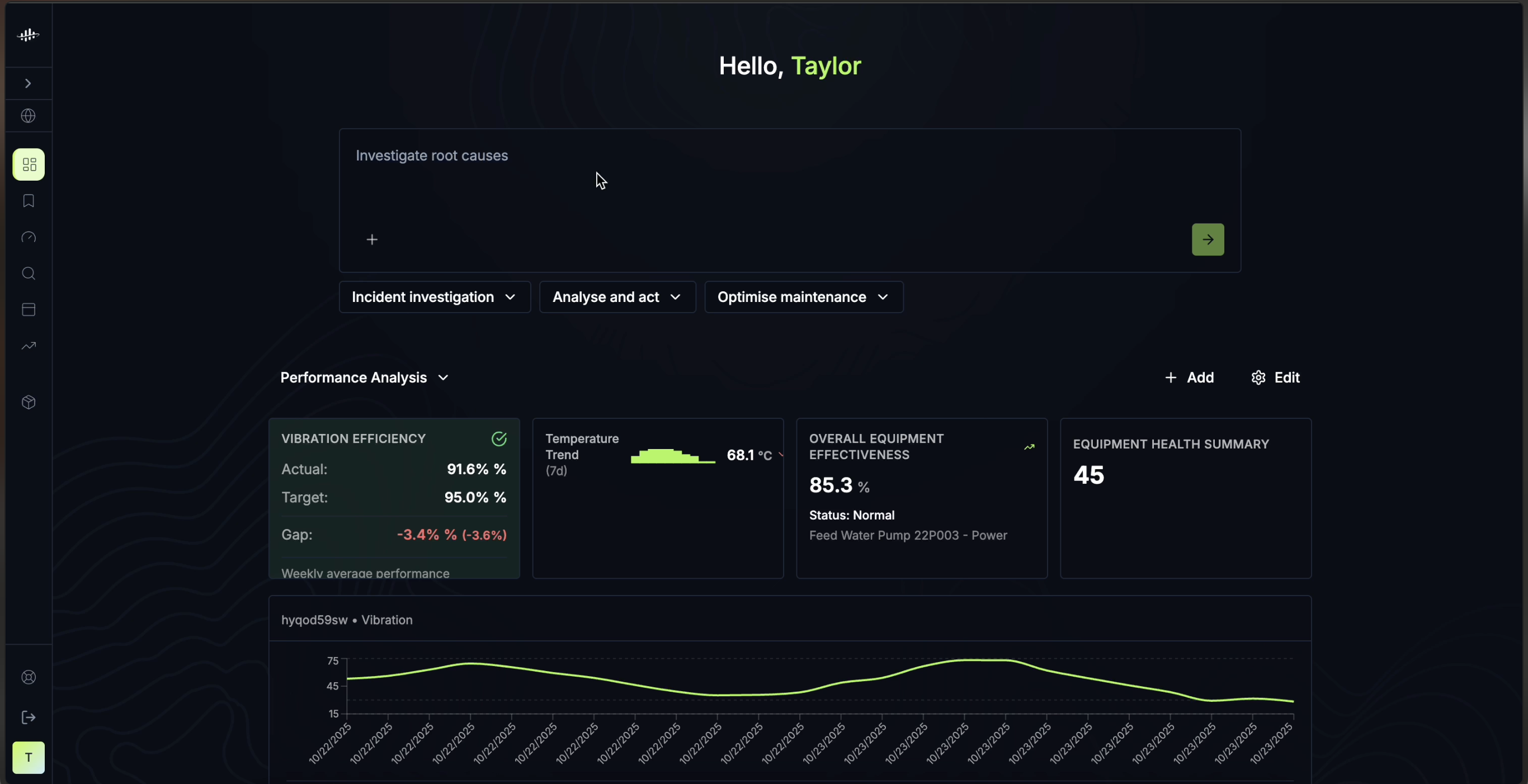

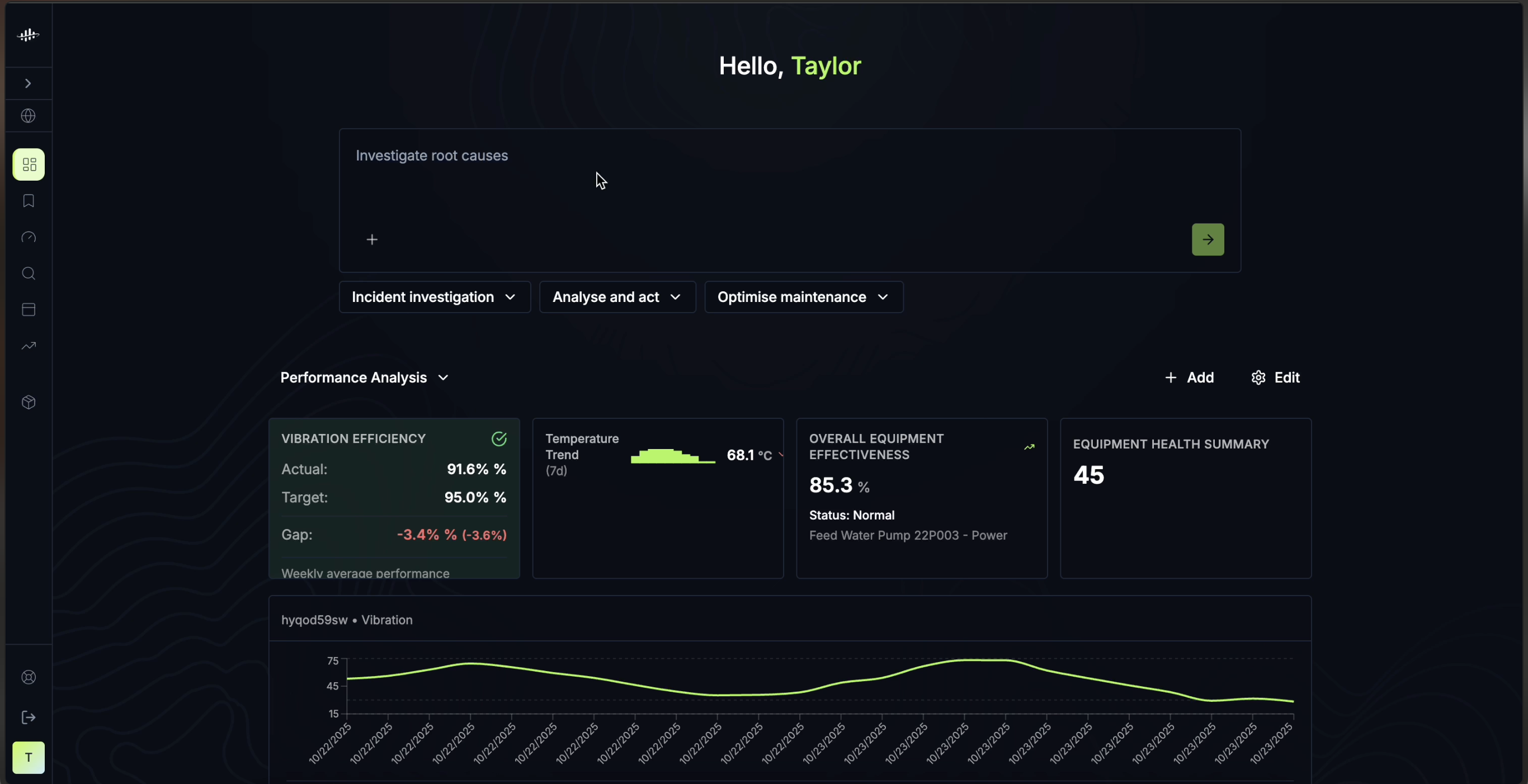

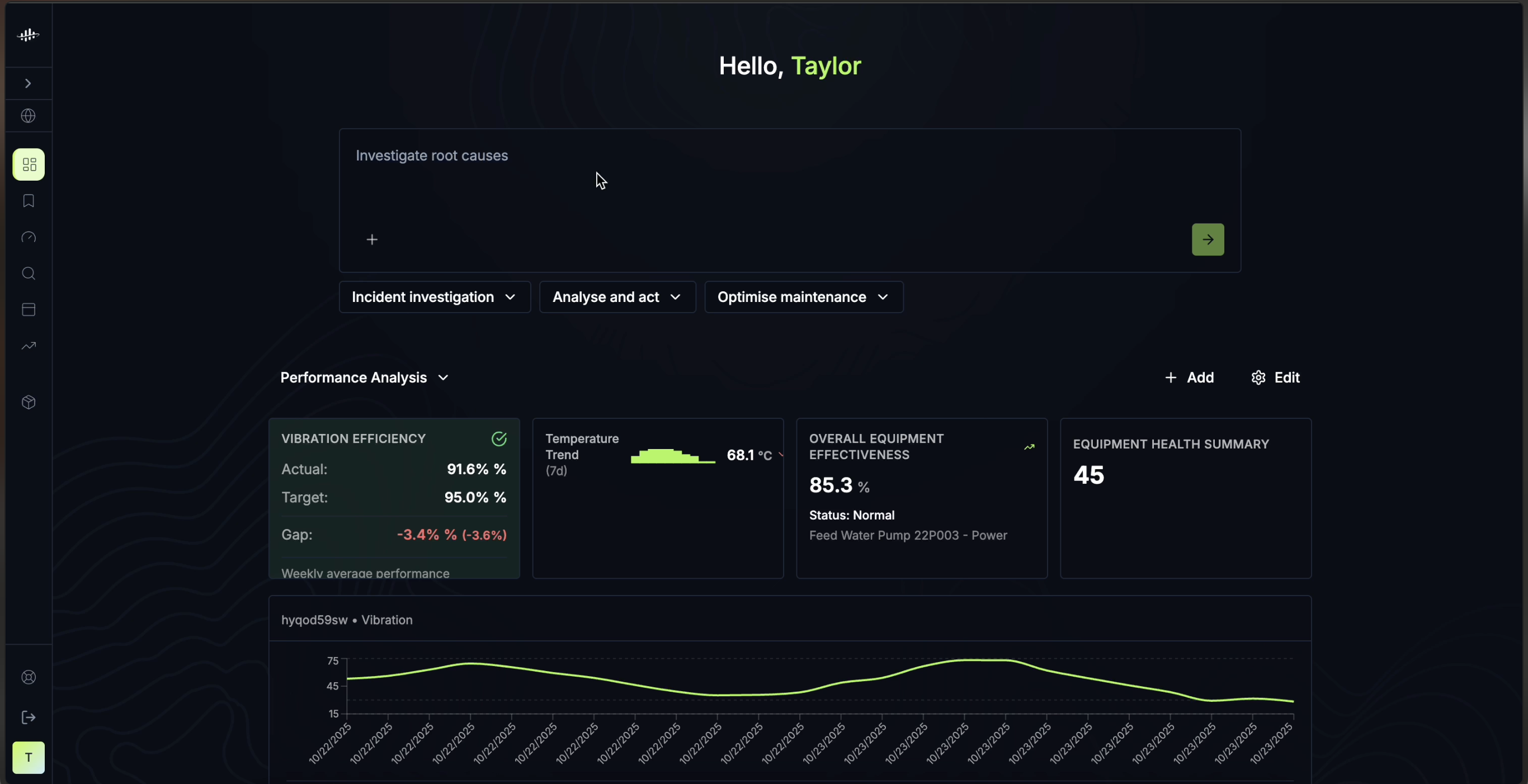

My specific design focus was AI UX interactions — the problem of how a system communicates its interpretation of what a user is asking, and how users can guide or course-correct it before it acts rather than after. In most agentic systems, a user submits a request and waits for a full response to judge whether the agent understood their intent. By that point the system has already done work — and any misalignment means starting over. The question I wanted to explore: how might we surface the system's interpretation early, so users can steer it at the moment of input, not after the fact?

When a user submits a request, the system surfaces signals of how it has interpreted the ask — which entities it recognised, what kind of response it's planning, which data sources it intends to draw from. Users can confirm, adjust, or redirect inline, before the agent runs — reducing the cost of misalignment without adding friction to the happy path.
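As a rough illustration of this pattern (not the prototype's actual code), the interpretation can be modelled as a small, immutable record that the user confirms or adjusts before anything runs. Every name here is hypothetical, including `Interpretation`, `adjust`, `run_agent`, and the example entity and data sources; the real system's data model is not described in this write-up.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class Interpretation:
    """Hypothetical record of how the system read the user's request."""
    entities: Tuple[str, ...]      # which entities it recognised
    response_kind: str             # what kind of response it plans
    data_sources: Tuple[str, ...]  # which data sources it intends to use


def adjust(interp: Interpretation, **changes) -> Interpretation:
    """Return a corrected copy; the original interpretation is left untouched."""
    return replace(interp, **changes)


def run_agent(query: str, interp: Interpretation, confirmed: bool) -> str:
    """Stand-in for the agent: refuses to do any work until the user confirms."""
    if not confirmed:
        raise ValueError("interpretation must be confirmed before the agent runs")
    return (
        f"Running {interp.response_kind} for {', '.join(interp.entities)} "
        f"using {', '.join(interp.data_sources)}"
    )


# Illustrative values only.
first_read = Interpretation(
    entities=("Pump P-101",),
    response_kind="troubleshooting summary",
    data_sources=("work orders",),
)
# The user redirects the system inline, before it acts.
steered = adjust(first_read, data_sources=("work orders", "sensor time series"))
result = run_agent("Why is P-101 vibrating?", steered, confirmed=True)
```

On the happy path the user confirms `first_read` unchanged, so steering costs a single step; a correction is one `adjust` call rather than a restart after a full agent run.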

This iteration included a micro-interaction where the system continuously re-interpreted the query as the user typed — surfacing interpretability signals in real time. In practice the constant updating was too jumpy and was dropped in the next iteration. A vibe-coded prototype surfaces this kind of problem immediately and viscerally; a Figma prototype, where every state is hand-crafted and perfectly timed, never could.

A chat interface is only as useful as the mental model a user brings to it. For first-time users of an AI-native industrial platform, that model doesn't exist yet — they don't know what to ask, what the system already knows, or what kinds of work it can actually do. To address this, I explored a micro-interaction where the chat input bar cycles through example queries drawn from real industrial workflows: a troubleshooting question, a maintenance planning task, an incident investigation prompt. The cycling isn't decorative — it's functional, showing users what the system already understands about their world and signalling the range of ways they can put it to work. The goal was to bootstrap that mental model at the moment of first contact, without requiring any onboarding.
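The cycling behaviour itself is simple to sketch. The snippet below is a minimal illustration, not the prototype's implementation: the example queries are invented for this sketch, and `placeholder_sequence` is a hypothetical helper that returns the order in which the input bar would show them.

```python
from itertools import cycle, islice
from typing import List, Sequence

# Invented examples, one per workflow type named above.
EXAMPLE_QUERIES = [
    "Why did the compressor trip last night?",         # troubleshooting
    "Plan next week's maintenance for the pump skid",  # maintenance planning
    "Summarise the events leading up to the incident", # incident investigation
]


def placeholder_sequence(examples: Sequence[str], count: int) -> List[str]:
    """Return the next `count` placeholders, wrapping around the example list."""
    return list(islice(cycle(examples), count))


# A UI would show one entry per tick of a timer; here we only compute the order.
shown = placeholder_sequence(EXAMPLE_QUERIES, 4)
```

After the last example the sequence wraps back to the first, so a first-time user who lingers on the input bar sees the full range of work the system supports.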

Once the interaction model was established, I extended the prototype to cover a full end-to-end industrial workflow — testing how the interpretability and steering patterns held up across multiple steps, data types, and agent handoffs. This gave PM and Engineering a live, walkable representation of the complete experience to react to, not a described concept.

Launched the new UX direction for the full product portfolio at Cognite — validated directly with ~150 real users at Impact 2025, who strongly confirmed the strategic direction.

The prototype became the primary artefact for Design, PM, and Engineering leaders to map out a detailed innovation and product roadmap across all product areas — giving the organisation something concrete to build from, not a slide deck to interpret.

Established vibe coding as a first-class design tool within the team — proving that live, LLM-powered prototypes drive faster and more honest alignment than static artefacts.

In six weeks, we successfully pivoted the whole company toward an AI-native strategic future — driven by user experience and tangible value, not just technology ambition.

Strategic vision explorations live or die by their concreteness. Principles and frameworks are necessary, but what actually aligns an organisation is something people can hold and react to. The combination of fast divergent exploration with live prototypes gave this work that quality — it wasn't a slide deck, it was something people could walk through and challenge.

The six-week constraint was a forcing function that prevented the work from becoming overspecified. The right output for a strategic vision exploration is a clear point of view — grounded in user insight, specific enough to drive real decisions — not a finished product.